#DigitalTransformation: No Future Without a Functional Website

From the butcher around the corner to the largest multinationals in the world, nearly every company is actively present on the internet via a website, and that presence has become absolutely necessary. The reasoning is simple: as customers spend more and more time online, across multiple devices, it is only normal for a business to be online too.

But simply being present is not enough. Today a site is much more than a business card: it has become a full communication channel. Consumers expect you to be as reachable through the site as by phone. And of course, it is also a graphic and semantic reflection of your company. The kind of site you have, the pictures on it, and the language you use already tell visitors a great deal about the type of business you are.

Customers are increasingly online, so it is only logical that your business should be there too.

Daily task

As an online business, you don’t want to confuse quality with quantity. Don’t spend your time on easy, low-impact tasks instead of strategic ones, especially when your website is your only sales channel. Even if you opt to work with a specialized IT company, keeping the site running is a full-time job. In some cases, only deep changes to the site and performance work call for specialists. But keeping information up to date, uploading product photos, keeping track of payments, and ensuring that all descriptions, prices, and the overall look are correct is an in-house task.

Basic conditions

Running a website isn’t as easy as counting one-two-three. A good website has to meet some basic conditions. You need to make sure that your website’s graphics don’t confuse visitors and make them leave your page. That means customers immediately find what they are looking for and don’t lose time on pop-ups and similar notifications. Even language selection should be automated as much as possible based on user history. Also, a call to action (“call us” or “push here for a chat conversation”) must be functional. All deeper content can sit further into the site.
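As a rough illustration of automated language selection, the sketch below first honors a language the visitor chose on an earlier visit and otherwise falls back to the browser’s `Accept-Language` header. The function name `pickLanguage`, the list of supported languages, and where the saved preference is stored (a cookie, for instance) are all assumptions made for this example, not a prescribed implementation.

```typescript
// Hypothetical helper: the name, parameters, and language codes are illustrative.
function pickLanguage(
  savedPreference: string | null, // e.g. read from a cookie set on an earlier visit
  acceptLanguage: string,         // raw Accept-Language header sent by the browser
  supported: string[],            // languages the site actually offers
  fallback: string                // language to use when nothing matches
): string {
  // 1. A choice the visitor made before (user history) wins.
  if (savedPreference !== null && supported.indexOf(savedPreference) !== -1) {
    return savedPreference;
  }

  // 2. Otherwise rank the header entries by their q weight (default 1).
  const ranked = acceptLanguage
    .split(",")
    .map((part) => {
      const pieces = part.trim().split(";");
      // Keep only the primary subtag: "fr-BE" becomes "fr".
      const lang = pieces[0].trim().toLowerCase().split("-")[0];
      let q = 1;
      for (const param of pieces.slice(1)) {
        const trimmed = param.trim();
        if (trimmed.indexOf("q=") === 0) {
          const parsed = parseFloat(trimmed.slice(2));
          q = isNaN(parsed) ? 0 : parsed;
        }
      }
      return { lang, q };
    })
    .filter((entry) => entry.lang !== "" && entry.lang !== "*")
    .sort((a, b) => b.q - a.q);

  for (const entry of ranked) {
    if (supported.indexOf(entry.lang) !== -1) {
      return entry.lang;
    }
  }

  // 3. Nothing matched: use the site default.
  return fallback;
}
```

A visitor can still be offered a visible language switch; the point is only that they should rarely need it.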

Classic

Just like in clothing, there are trends and fashions in website design. Long-scrolling home pages are the best example. If a visitor has to scroll a lot, make sure it is for a good reason.

Put a functional basic menu at the top of the page and credentials or customer testimonials at the bottom. Be very sparing with colors, striking fonts, and bold layouts. There are good reasons why you do not often see that kind of thing: it draws attention away from the essence, it makes the site harder to read, and it looks very unprofessional. As far as reading is concerned, overly long texts on a web page are too heavy. Limit yourself to a handful of paragraphs per page. If you have to explain difficult things, for example if you are an insurance company presenting a particular policy, you can give a summary on the homepage and link visitors to the full text, or provide a separate PDF that they can download and print.

Nothing beats a classic, quiet layout. Maybe in 1998 you could get away with all those flashy effects, but not anymore.

Responsive design

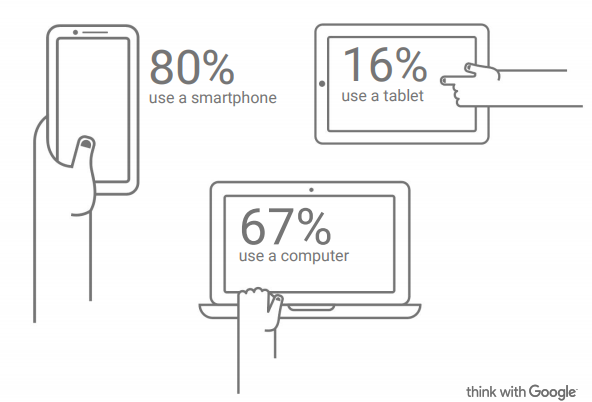

Another thing to keep in mind is that internet users no longer rely only on a PC to visit your website; they use multiple devices such as tablets and phones. Responsive design was invented to make navigation easier across these device formats. That is another important trend today: sites adapt themselves to the device they are viewed on. This way you offer optimal navigation, and all information is easily readable on a large PC screen, a tablet, and a smartphone. Studies show that more and more customers use a mobile device to consult company websites; the figure is about 80%.
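To make the idea of a site adapting to its device concrete, here is a minimal sketch that maps a viewport width to a layout. In practice this is usually handled with CSS media queries rather than script; the breakpoint values (600 and 1024 pixels) and the names `Layout` and `layoutForWidth` are assumptions chosen for illustration only.

```typescript
// Illustrative layout names; a real site may distinguish more or fewer sizes.
type Layout = "phone" | "tablet" | "desktop";

// Hypothetical breakpoints in CSS pixels, chosen only for this example.
const PHONE_MAX_WIDTH = 600;
const TABLET_MAX_WIDTH = 1024;

// Pick the layout that fits the width of the visitor's screen.
function layoutForWidth(widthPx: number): Layout {
  if (widthPx < PHONE_MAX_WIDTH) {
    return "phone";
  }
  if (widthPx < TABLET_MAX_WIDTH) {
    return "tablet";
  }
  return "desktop";
}
```

The same page content is served to everyone; only the arrangement changes, for example a collapsed menu on a phone and a full menu bar on a desktop.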

If you run into difficulties or need advice on technical issues, don’t hesitate to contact us. We’ll be happy to assist you.